How to Find Duplicate Addresses: A Step-by-Step Guide

Anyone who regularly sends letters, catalogs, or fundraising appeals knows the problem: names appear twice or three times in the address list. Sometimes it is obvious, but often duplicates hide behind different spellings, swapped fields, or missing data.

The consequences are measurable. Every duplicate address costs postage, printing, and shipping materials. For a mailing with 20,000 recipients and a 10 percent duplicate rate, 2,000 mailings go to people who already received the letter. At EUR 0.28 per piece, that amounts to EUR 560 per mailing. With monthly dispatches, that adds up to roughly EUR 6,700 per year.

This article walks you through six steps to systematically find and eliminate duplicate addresses in your database.

Step 1: Take Stock – How Bad Is It Really?

Before you start cleaning, you need a realistic picture of the situation. The following duplicate rates are typical rules of thumb from practice:

| Data Source | Typical Duplicate Rate |
|---|---|
| Single CRM system, well maintained | 3–5% |
| CRM after data migration | 8–15% |
| Merged lists from multiple sources | 12–25% |
| Historically grown association database | 10–20% |
| Purchased or rented address lists | 5–12% |

A simple method for a quick check: sort your list by last name and postal code, then scroll through the sorted data. If you spot obvious duplicates within a few minutes, the actual rate is significantly higher than what you can see, because subtle duplicates are invisible to the naked eye.

Count the obvious hits and multiply by a factor of 3 to 5. That gives you a reasonable estimate of the real duplicate volume.
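The sort-and-scan check can also be scripted. The Python sketch below assumes each record is a hypothetical (last_name, first_name, postal_code) tuple. It counts only exact neighbors after sorting, i.e. the "obvious" hits, and then applies the 3-to-5 factor from the rule of thumb above.

```python
def quick_estimate(rows):
    """rows: (last_name, first_name, postal_code) tuples (hypothetical layout).

    Returns the number of obvious hits and a (low, high) estimate of the real volume.
    """
    # sort by last name, then postal code, then first name
    ordered = sorted(rows, key=lambda r: (r[0], r[2], r[1]))
    # an "obvious" hit is a row identical to the row directly above it
    obvious = sum(1 for prev, cur in zip(ordered, ordered[1:]) if prev == cur)
    # rule of thumb from the text: multiply by 3 to 5
    return obvious, (obvious * 3, obvious * 5)


rows = [
    ("Mueller", "Max", "70001"),
    ("Weber", "Hans", "80331"),
    ("Mueller", "Max", "70001"),
    ("Schmidt", "Hans", "10115"),
]
print(quick_estimate(rows))
```

Because only exact matches count, this deliberately mirrors what the eye catches; spelling variants are left to the later steps.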

Step 2: Prepare and Normalize Your Data

Duplicate addresses hide behind formatting differences. Before you search for duplicates at all, the data must be brought into a uniform format.

What Normalization Looks Like in Practice

Record A, before → after:

Dr. Max Müller → Max Mueller
Hauptstr. 12a → Hauptstrasse 12a
70001 Stuttgart → 70001 Stuttgart

Record B, before → after:

Prof. MAX MUELLER → Max Mueller
Hauptstraße 12 A → Hauptstrasse 12a
70001 Stuttgart → 70001 Stuttgart

After normalization, both entries are identical, which they clearly were not before.

Key Normalization Rules

| Rule | Before | After |
|---|---|---|
| Resolve umlauts and ß | Müller, Böhm, Jäger, Straße | Mueller, Boehm, Jaeger, Strasse |
| Standardize case | MAX MUELLER, max mueller | Max Mueller |
| Remove titles | Dr., Prof., Dipl.-Ing. | (removed) |
| Expand street abbreviations | Hauptstr., Hauptstr | Hauptstrasse |
| Trim whitespace | " Max Mueller " | "Max Mueller" |
| Standardize house numbers | 12 a, 12A, 12/a | 12a |
| Remove special characters | Müller-Schmidt | Mueller Schmidt |

Without normalization, every subsequent step becomes unreliable. Even a good matching algorithm will rate "Dr. Max Müller" and "MAX MUELLER" as only moderately similar, even though they are clearly the same person.

In Excel, you can implement basic normalization with formulas – such as =PROPER(TRIM(A2)) for whitespace and casing. For umlaut replacement you need nested SUBSTITUTE functions. Beyond a certain complexity, this approach quickly becomes error-prone and hard to maintain.
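For readers who prefer a script over nested SUBSTITUTE formulas, here is a minimal Python sketch of the rules table. It illustrates the rules under simplifying assumptions and is not a complete normalizer: the title list covers only Dr., Prof. and Dipl.-Ing., the street rule only expands a "str"/"Str." abbreviation at the end of the street name, and the function names are hypothetical.

```python
import re

# German special characters mapped to their two-letter spellings (rule: resolve umlauts and ß)
UMLAUTS = {"ä": "ae", "ö": "oe", "ü": "ue", "Ä": "Ae", "Ö": "Oe", "Ü": "Ue", "ß": "ss"}

# Academic titles to strip; this short list is an assumption, extend it for your data
TITLES = re.compile(r"\b(?:Dipl\.-Ing|Prof|Dr)\.\s*", re.IGNORECASE)


def replace_umlauts(text: str) -> str:
    for char, replacement in UMLAUTS.items():
        text = text.replace(char, replacement)
    return text


def normalize_name(name: str) -> str:
    name = replace_umlauts(name)
    name = TITLES.sub("", name)               # remove titles
    name = re.sub(r"[^A-Za-z ]+", " ", name)  # special characters such as hyphens become spaces
    name = " ".join(name.split())             # trim and collapse whitespace
    return name.title()                       # standardize case: "MAX MUELLER" -> "Max Mueller"


def normalize_street(street: str) -> str:
    street = replace_umlauts(street)          # "Hauptstraße" -> "Hauptstrasse"
    # expand "str." / "str" at the end of the street name, keeping the first letter's case
    street = re.sub(r"([Ss])tr\.?(?=\s|\d|$)", r"\1trasse", street)
    # "12 A", "12A", "12/a" -> "12a"
    street = re.sub(r"(\d+)\s*/?\s*([A-Za-z])\b",
                    lambda m: m.group(1) + m.group(2).lower(), street)
    return " ".join(street.split())           # trim and collapse whitespace


print(normalize_name("Prof. MAX MUELLER"), "|", normalize_street("Hauptstraße 12 A"))
```

The street is deliberately not title-cased: Python's `str.title()` would turn "12a" into "12A" and undo the house-number rule.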

Step 3: Define Key Fields

Not all address fields carry equal weight for duplicate detection. Comparing every field equally either produces too many false positives or misses real duplicates.

The Right Field Weighting

High relevance:

- Last name → Core identification
- Street → Location-based assignment
- Postal code → Geographic classification

Medium relevance:

- First name → Disambiguation for common surnames
- House number → Precision within a street

Low relevance:

- City → Redundant with correct postal code
- Salutation → No identification value
- Company → Only relevant for B2B lists
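One way to put this weighting into practice is a weighted average of per-field similarities. In the sketch below, the weights 3/2/1 for high/medium/low relevance are assumptions chosen for illustration. Python's `difflib.SequenceMatcher.ratio()` stands in for whatever string similarity you actually use. Salutation and company are left out, since they carry little identification value for private addresses.

```python
from difflib import SequenceMatcher

# Hypothetical weights mirroring the high / medium / low relevance tiers
FIELD_WEIGHTS = {
    "last_name": 3.0,
    "street": 3.0,
    "postal_code": 3.0,
    "first_name": 2.0,
    "house_number": 2.0,
    "city": 1.0,
}


def weighted_similarity(rec_a: dict, rec_b: dict) -> float:
    """Weighted average of per-field similarities in [0, 1] for two normalized records."""
    score = 0.0
    total_weight = 0.0
    for field, weight in FIELD_WEIGHTS.items():
        a, b = rec_a.get(field, ""), rec_b.get(field, "")
        if not a or not b:
            continue  # missing data neither helps nor hurts the match
        score += weight * SequenceMatcher(None, a, b).ratio()
        total_weight += weight
    return score / total_weight if total_weight else 0.0


a = {"last_name": "Mueller", "first_name": "Max", "street": "Hauptstrasse",
     "house_number": "12", "postal_code": "70001", "city": "Stuttgart"}
b = dict(a, first_name="Petra")
print(round(weighted_similarity(a, b), 2))
```

Skipping empty fields keeps a record with a missing first name from being penalized for data it simply does not have.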

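A proven strategy: build a search key from last name + postal code as a pre-filter (often called blocking). All records sharing the same key enter the candidate pool. Then apply the more precise methods only to those candidate pairs. If the last-name part of the key is a phonetic code (see Step 4) rather than the spelled name, spelling variants end up in the same group as well.

Example search keys:

"Mueller|70001" → Finds: Max Mueller, M. Mueller, Petra Mueller-Schmidt (if double-barreled names are also keyed on their first part)

"Schmidt|10115" → Finds: Hans Schmidt, Hannelore Schmidt, and, with a phonetic key, H. Schmitt

This simple approach alone drastically reduces the number of comparisons. Instead of comparing every record with every other one (20,000 × 19,999 / 2 ≈ 200 million pairs), you only check pairs within each key group, which typically adds up to just a few thousand comparisons in total.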
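A minimal sketch of the search-key pre-filter, assuming records are dicts with already normalized `last_name` and `postal_code` fields (hypothetical names). Records are grouped by key, and pairs are formed only inside each group. Swapping the last name for its phonetic code in `block_key` would catch spelling variants as well.

```python
from collections import defaultdict
from itertools import combinations


def block_key(record: dict) -> str:
    """Search key such as "Mueller|70001" (hypothetical field names)."""
    return f"{record['last_name']}|{record['postal_code']}"


def candidate_pairs(records):
    """Yield only pairs of records that share a search key."""
    groups = defaultdict(list)
    for rec in records:
        groups[block_key(rec)].append(rec)
    for group in groups.values():
        yield from combinations(group, 2)


def all_pairs_count(n: int) -> int:
    """Number of unique pairs when every record is compared with every other one."""
    return n * (n - 1) // 2


records = (
    [{"last_name": "Mueller", "postal_code": "70001"}] * 3
    + [{"last_name": "Schmidt", "postal_code": "10115"}] * 2
    + [{"last_name": "Weber", "postal_code": "80331"}]
)
print(len(list(candidate_pairs(records))), "of", all_pairs_count(len(records)), "pairs")
```

For 20,000 records, `all_pairs_count(20000)` gives 199,990,000, the "≈ 200 million" baseline that blocking avoids.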

Step 4: Apply Comparison Methods

With normalized data and defined key fields, you can start the actual duplicate search. Three methods have proven effective in practice:

Exact Comparison

The simplest approach: character-by-character comparison. Finds only identical entries. Useful as a first quick pass but catches only 10 to 20 percent of actual duplicates.

Phonetic Comparison

Algorithms like Cologne Phonetics convert names into sound codes. "Meyer", "Meier", and "Maier" receive the same code and are flagged as potential duplicates.

Cologne Phonetics:

"Meyer" → 67

"Meier" → 67

"Maier" → 67

"Müller" → 657

"Miller" → 657

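Phonetic methods excel at name variants but are of limited use for addresses: they encode only letters, so house numbers and postal codes drop out entirely, and an abbreviation such as "Hauptstr." yields a different code than "Hauptstrasse".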
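To show how such sound codes come about, here is a compact Python sketch of the Kölner Phonetik (Cologne Phonetics) rules: letters are mapped to digits depending on their neighbors, runs of the same digit are collapsed, and every 0 except a leading one is dropped. It is written for illustration, not taken from a particular library, and it assumes the input is a single name. It reproduces the codes listed above, e.g. "67" for Meyer/Meier/Maier and "657" for Müller/Miller.

```python
C_HARD_INITIAL = set("AHKLOQRUX")  # C at the start of a word before these letters -> 4
C_HARD = set("AHKOQUX")            # C elsewhere before these letters (not after S/Z) -> 4


def cologne_phonetic(word: str) -> str:
    """Kölner Phonetik code of a single word, e.g. "Meyer" -> "67"."""
    text = word.upper()  # note: "ß".upper() == "SS", which codes like S
    for umlaut, vowel in (("Ä", "A"), ("Ö", "O"), ("Ü", "U")):
        text = text.replace(umlaut, vowel)
    letters = [c for c in text if "A" <= c <= "Z"]  # hyphens, spaces etc. are ignored

    codes = []
    for i, ch in enumerate(letters):
        prev = letters[i - 1] if i > 0 else ""
        nxt = letters[i + 1] if i + 1 < len(letters) else ""
        if ch in "AEIJOUY":
            code = "0"
        elif ch == "H":
            code = ""  # H is not coded
        elif ch == "B":
            code = "1"
        elif ch == "P":
            code = "3" if nxt == "H" else "1"
        elif ch in "DT":
            code = "8" if nxt in ("C", "S", "Z") else "2"
        elif ch in "FVW":
            code = "3"
        elif ch in "GKQ":
            code = "4"
        elif ch == "C":
            if i == 0:
                code = "4" if nxt in C_HARD_INITIAL else "8"
            else:
                code = "4" if nxt in C_HARD and prev not in ("S", "Z") else "8"
        elif ch == "X":
            code = "8" if prev in ("C", "K", "Q") else "48"
        elif ch == "L":
            code = "5"
        elif ch in "MN":
            code = "6"
        elif ch == "R":
            code = "7"
        else:  # S and Z
            code = "8"
        codes.append(code)

    # collapse runs of the same digit, then drop every "0" except a leading one
    collapsed = []
    for digit in "".join(codes):
        if not collapsed or collapsed[-1] != digit:
            collapsed.append(digit)
    return "".join(collapsed[:1] + [d for d in collapsed[1:] if d != "0"])


for name in ("Meyer", "Meier", "Maier", "Müller", "Miller"):
    print(name, cologne_phonetic(name))
```

Because umlauts and their spelled-out forms code identically ("Müller" and "Mueller" both give "657"), the phonetic code also works on normalized data.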

Fuzzy Matching

The most powerful method. Algorithms like Levenshtein distance (normalized to the string length) or Jaro-Winkler compute a similarity score between 0 and 100 percent:

Comparison 1:

"Max Mueller, Hauptstrasse 12, 70001"

"Max Mueller, Hauptstr 12, 70001"

→ Similarity: 92% → Duplicate

Comparison 2:

"Max Mueller, Hauptstrasse 12, 70001"

"Hans Weber, Lindenweg 5, 80331"

→ Similarity: 18% → Not a duplicate

Comparison 3:

"Max Mueller, Hauptstrasse 12, 70001"

"Petra Mueller, Hauptstrasse 12, 70001"

→ Similarity: 84% → Review case (same household?)
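To make the idea of a similarity score concrete, here is a plain Python sketch of Levenshtein distance normalized to a score between 0 and 1 (one common normalization among several; Jaro-Winkler is a different algorithm and is not shown). Scores depend on the algorithm and on prior normalization, so this sketch does not reproduce the percentages above exactly. For Comparison 1 it yields 1 - 4/35 ≈ 0.886, because turning "Hauptstrasse" into "Hauptstr" takes four deletions.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions and substitutions."""
    previous = list(range(len(b) + 1))  # distances from "" to each prefix of b
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            current.append(min(
                previous[j] + 1,               # deletion
                current[j - 1] + 1,            # insertion
                previous[j - 1] + (ca != cb),  # substitution, free if characters match
            ))
        previous = current
    return previous[-1]


def similarity(a: str, b: str) -> float:
    """1 minus the edit distance divided by the length of the longer string."""
    if not a and not b:
        return 1.0
    return 1 - levenshtein(a, b) / max(len(a), len(b))


a = "Max Mueller, Hauptstrasse 12, 70001"
b = "Max Mueller, Hauptstr 12, 70001"
print(f"{similarity(a, b):.1%}")
```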

The threshold above which a pair counts as a duplicate typically falls between 80 and 90 percent. The optimal value must be calibrated to your specific dataset – too low generates false positives, too high lets real duplicates slip through.
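One way to do this calibration: score a sample of candidate pairs, label each one by hand as duplicate or not, and count false positives and missed duplicates at several thresholds. The sample scores below are made up for illustration, and the function name is hypothetical.

```python
def evaluate_threshold(labeled_pairs, threshold):
    """labeled_pairs: (score, is_duplicate) tuples from a manually reviewed sample.

    Returns (false_positives, missed_duplicates) for the given threshold.
    """
    false_positives = sum(1 for s, dup in labeled_pairs if s >= threshold and not dup)
    missed = sum(1 for s, dup in labeled_pairs if s < threshold and dup)
    return false_positives, missed


# hypothetical hand-labeled sample
sample = [(0.92, True), (0.88, True), (0.84, False), (0.81, True), (0.18, False)]

for t in (0.80, 0.85, 0.90):
    fp, missed = evaluate_threshold(sample, t)
    print(f"threshold {t:.2f}: {fp} false positives, {missed} missed duplicates")
```

Raising the threshold trades false positives for missed duplicates; the sweet spot is wherever that trade-off suits your mailing.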

For a deeper dive into the individual algorithms and their strengths, read our article Detecting Duplicates: 7 Methods for Clean Address Data.

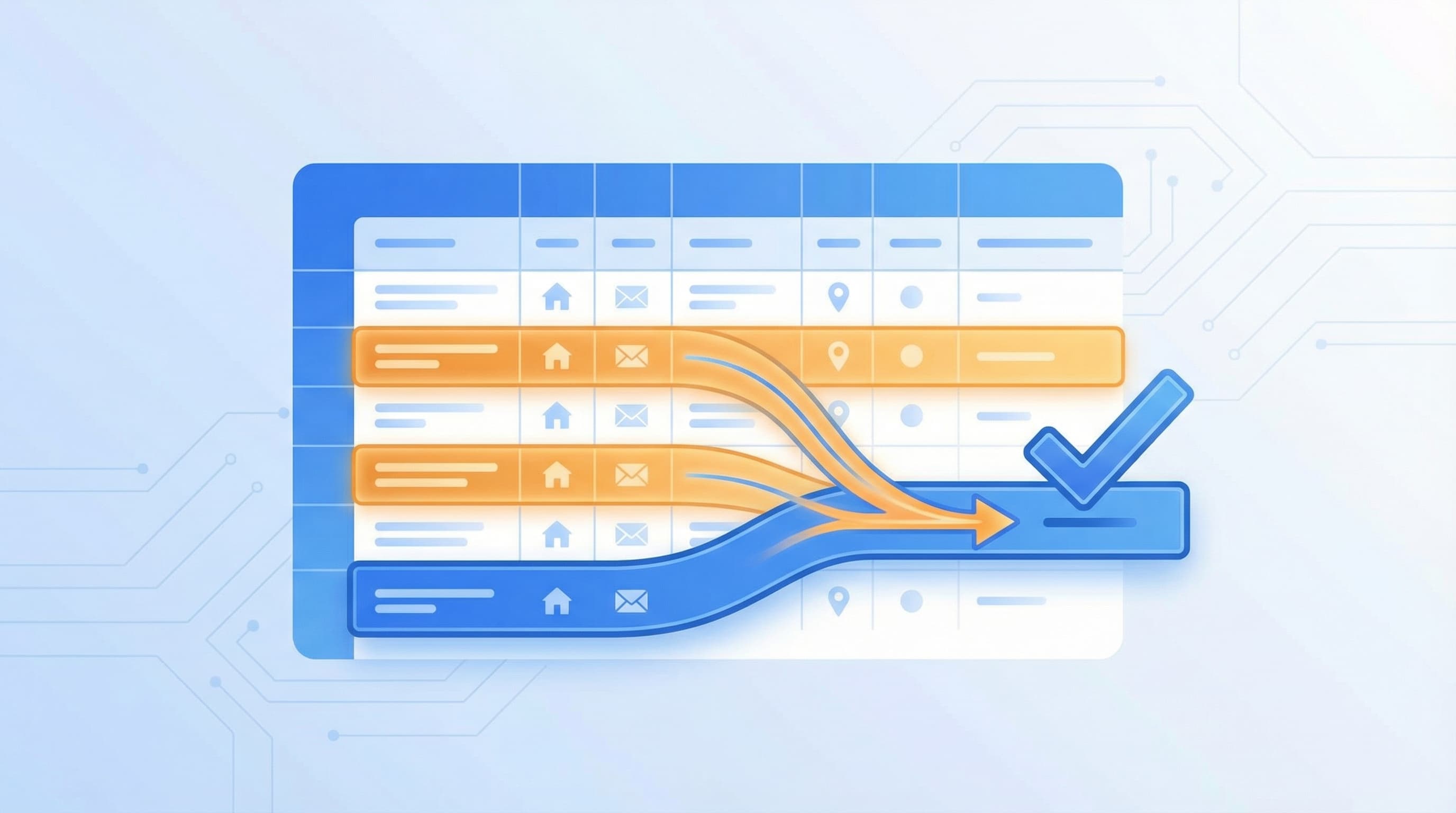

Step 5: Review Results and Merge Records

The automated search delivers a list of duplicate candidates. Now the real work begins: which hits are genuine duplicates, and which record should be kept?

Three Typical Decision Scenarios

Scenario 1 – Clear duplicate:

A: Max Mueller | Hauptstrasse 12 | 70001 Stuttgart | Phone: 0711-123456

B: Max Mueller | Hauptstrasse 12 | 70001 Stuttgart | Phone: —

→ Keep A (more complete record)

Scenario 2 – Complementary information:

A: Max Mueller | Hauptstrasse 12 | 70001 Stuttgart | Phone: 0711-123456

B: M. Mueller | Hauptstr. 12 | 70001 Stuttgart | Email: max@example.de

→ Merge: Full name from A, email from B

Scenario 3 – Household, not a duplicate:

A: Max Mueller | Hauptstrasse 12 | 70001 Stuttgart

B: Petra Mueller | Hauptstrasse 12 | 70001 Stuttgart

→ Not a duplicate but two people at the same address

Scenario 3 highlights a common pitfall: people with the same last name at the same address are not necessarily duplicates. For postage optimization, the information is still valuable – instead of sending two letters to "Max Mueller" and "Petra Mueller", you send one to "The Mueller Family". Tools like ListenFix detect such household relationships automatically and offer the option to send just one mailing per household.
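The household idea can be sketched in a few lines of Python. This is an illustration, not how ListenFix or any particular tool does it. It assumes normalized, already deduplicated records stored as dicts with hypothetical keys `last_name`, `first_name`, `street`, `house_number` and `postal_code`. Sharing a last name and an address is only a heuristic, so a group is a candidate for one joint mailing, not proof of a shared household.

```python
from collections import defaultdict


def group_households(records):
    """Group records that share last name, street, house number and postal code."""
    households = defaultdict(list)
    for rec in records:
        key = (rec["last_name"], rec["street"], rec["house_number"], rec["postal_code"])
        households[key].append(rec)
    return list(households.values())


def household_salutation(members):
    """One addressee per household: the person's name, or "The <Name> Family"."""
    if len(members) == 1:
        m = members[0]
        return f"{m['first_name']} {m['last_name']}"
    return f"The {members[0]['last_name']} Family"


records = [
    {"first_name": "Max", "last_name": "Mueller", "street": "Hauptstrasse",
     "house_number": "12", "postal_code": "70001"},
    {"first_name": "Petra", "last_name": "Mueller", "street": "Hauptstrasse",
     "house_number": "12", "postal_code": "70001"},
    {"first_name": "Hans", "last_name": "Weber", "street": "Lindenweg",
     "house_number": "5", "postal_code": "80331"},
]
for members in group_households(records):
    print(household_salutation(members))
```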

Rules for Merging

Define in advance which record takes priority:

- Most recent entry wins – ideal for CRM data with timestamps

- Most complete entry wins – the record with the most populated fields

- Source decides – web shop data takes precedence over imported lists

- Manual review – for conflicting information (different phone numbers, different addresses)
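The "most complete entry wins" rule, combined with filling gaps from the other records as in Scenario 2, can be sketched as follows. Records are assumed to be dicts mapping field names to strings, with an empty string meaning missing; the function names are hypothetical. On a tie in completeness, the earlier record wins because Python's sort is stable. Where both records hold different non-empty values, the sketch simply keeps the winner's value, so such conflicts still need the manual review described above.

```python
def completeness(record: dict) -> int:
    """Number of populated fields."""
    return sum(1 for value in record.values() if value)


def merge_records(records: list) -> dict:
    """Most complete record wins; its empty fields are filled from the other records."""
    ranked = sorted(records, key=completeness, reverse=True)
    survivor = dict(ranked[0])
    for other in ranked[1:]:
        for field, value in other.items():
            if value and not survivor.get(field):
                survivor[field] = value  # fill a gap in the winning record
    return survivor


a = {"name": "Max Mueller", "street": "Hauptstrasse 12", "postal_code": "70001",
     "city": "Stuttgart", "phone": "0711-123456", "email": ""}
b = {"name": "M. Mueller", "street": "Hauptstrasse 12", "postal_code": "70001",
     "city": "Stuttgart", "phone": "", "email": "max@example.de"}
print(merge_records([a, b]))
```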

Step 6: Establish Ongoing Monitoring

Cleaning once is not enough. New duplicates emerge daily through manual entry, web forms, data imports, and CRM syncing.

Prevent Duplicates at the Source

| Measure | Effect |
|---|---|
| Required fields in web forms | Prevents incomplete entries |
| Postal code validation on input | Reduces erroneous addresses |
| Real-time duplicate check on creation | Warns before saving |
| Uniform data entry guidelines | Minimizes format variations |
| Regular cleaning runs (quarterly) | Catches duplicates that slip through |
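A real-time check on creation can be as simple as looking up the search key before saving. The class below is a hypothetical in-memory stand-in for what would normally be a database query. The key is the coarse last name + postal code pre-filter from Step 3, so any matches it returns are candidates to show as a warning, not confirmed duplicates.

```python
from collections import defaultdict


class DuplicateGuard:
    """Hypothetical in-memory index that warns before a likely duplicate is saved."""

    def __init__(self):
        self._index = defaultdict(list)

    @staticmethod
    def _key(record: dict) -> tuple:
        # coarse search key: last name (trimmed, lowercased) + postal code
        return (record["last_name"].strip().lower(), record["postal_code"].strip())

    def check(self, record: dict) -> list:
        """Return already-saved records that share the search key with the new one."""
        return list(self._index.get(self._key(record), []))

    def save(self, record: dict) -> None:
        self._index[self._key(record)].append(record)


guard = DuplicateGuard()
guard.save({"first_name": "Max", "last_name": "Mueller", "postal_code": "70001"})
new_entry = {"first_name": "M.", "last_name": " MUELLER", "postal_code": "70001"}
print(guard.check(new_entry))  # a web form would show these matches before saving
```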

A quarterly cleaning run strikes a good balance between effort and data quality. Those who mail more frequently – monthly, for example – should run the check before each dispatch.

Manual cleaning in Excel quickly becomes impractical as data volumes grow. For details on why Excel falls short in duplicate detection, see our article Removing Address Duplicates: Why Excel Is Not Enough. Professional tools like ListenFix automate steps 2 through 5 of this guide: upload your CSV or Excel file, start the analysis, and receive a cleaned list within seconds – complete with a log of detected duplicates. All processing happens locally on your computer, meaning your address data is never transmitted.

How Much Can You Actually Save?

The savings depend on three factors: the size of your list, the duplicate rate, and your mailing frequency.

Example calculation:

Address inventory: 30,000

Duplicate rate: 12%

Duplicates: 3,600

Postage per piece: EUR 0.28 (Dialogpost)

Savings per mailing: EUR 1,008

Mailings per year: 6

Annual savings: EUR 6,048

On top of that come indirect savings: fewer returned mailings, more precise response rates, and no duplicate customer contacts that damage your company's image.

Even for smaller inventories, cleaning pays off. With 5,000 addresses, an 8 percent duplicate rate, and four mailings per year, you still save over EUR 400 annually – more than the cost of a professional tool.
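The arithmetic behind both examples fits in a small function. To keep the figures exact, this sketch works in whole euro cents and whole-percent duplicate rates (an assumption; fractional rates would need a type such as `Decimal`). Indirect savings are not included.

```python
def annual_savings(addresses: int, duplicate_rate_percent: int,
                   postage_cents: int, mailings_per_year: int):
    """Return (duplicates, savings per mailing in EUR, annual savings in EUR)."""
    duplicates = addresses * duplicate_rate_percent // 100
    per_mailing_cents = duplicates * postage_cents
    return (duplicates,
            per_mailing_cents / 100,
            per_mailing_cents * mailings_per_year / 100)


print(annual_savings(30_000, 12, 28, 6))  # example calculation above
print(annual_savings(5_000, 8, 28, 4))    # smaller inventory
```

The first call returns (3600, 1008.0, 6048.0), matching the example calculation; the second returns (400, 112.0, 448.0), i.e. just over EUR 400 per year.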

Eliminating Duplicate Addresses Systematically

The six steps in summary:

- Take stock – Estimate the duplicate rate and recognize the need for action

- Normalize – Create a uniform format for all fields

- Key fields – Choose the right fields for comparison

- Comparison methods – From exact to phonetic to fuzzy matching

- Merge – Review results and keep the best record

- Ongoing monitoring – Prevent new duplicates rather than just removing old ones

The effort for an initial cleanup is manageable. The annual savings typically exceed the investment from the very first larger mailing. What matters most is not stopping at a one-time cleanup but establishing a recurring process that safeguards data quality in the long run.

Clean your mailing list — try it now

ListenFix uses fuzzy matching to find significantly more duplicates than Excel. 100% offline, GDPR-compliant.
