Improve Data Quality: A Practical Guide for Businesses

Poor data quality is one of the most expensive mistakes businesses silently accept. Not because they fail to notice the damage, but because it spreads across dozens of processes and rarely shows up as a single line item. Wrong addresses, outdated contacts, inconsistent formatting – the consequences range from duplicate mailings to missed sales opportunities and GDPR fines.

Gartner estimates that the average cost of poor data quality is 12.9 million US dollars per year per organization. Even if only a fraction of that applies to your company, the numbers add up quickly: with an address database of 20,000 entries, return mail, duplicate postage and manual corrections can easily reach five-figure amounts.

This article explains what data quality actually means, which dimensions to measure and how to improve it sustainably in five steps.

What Data Quality Actually Means

Data quality is not a binary state. It is not about whether your data is "good" or "bad." Rather, data quality describes the extent to which your data is fit for its intended purpose.

For postal mailings, an address must be postally correct and deliverable. For marketing, you additionally need to know whether consent exists. For accounting, the correct company name matters. The same data can be sufficient for one purpose and inadequate for another.

Six dimensions define data quality:

| Dimension | Meaning | Example |
|---|---|---|
| Accuracy | Does the data match reality? | Postal code 70173 actually belongs to Stuttgart |
| Completeness | Are all required fields populated? | An address without a house number is incomplete |
| Consistency | Are identical facts represented identically? | "St." vs. "Street" vs. "Str." |
| Timeliness | Does the data reflect the current state? | Is the address still correct after a move? |
| Uniqueness | Is each entity recorded exactly once? | No duplicates per person |
| Conformity | Does the data comply with formal rules? | German postal codes have exactly 5 digits |

Why Data Quality Deteriorates Over Time

Address databases do not degrade overnight. The decline happens gradually and on multiple levels simultaneously:

Natural decay: In Germany alone, roughly 8.5 million people move each year. For a database with 50,000 contacts, this statistically means about 5,000 addresses become invalid per year – without anyone making a mistake.

Entry errors: Every manual data entry is error-prone. Typos in street names, transposed digits in postal codes, missing umlauts. Studies show that 1 to 4 percent of fields contain errors after manual input.

Source merging: When CRM, newsletter tool and accounting system maintain separate address databases that get merged, duplicates are inevitable. The same customer appears as "Max Müller" in the CRM and "Mueller, Max" in the billing address.

Missing processes: Without defined rules for data entry and regular cleansing, chaos grows with every new record.

Example: Natural decay over 3 years

──────────────────────────────────────

Starting point: 50,000 addresses, 95% correct

After 1 year: 50,000 addresses, ~85% correct (5,000 moves + 500 entry errors)

After 2 years: 50,000 addresses, ~76% correct

After 3 years: 50,000 addresses, ~68% correct

→ Nearly one in three addresses is incorrect after 3 years
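
The decay in this example behaves like compound loss: each year, a share of the still-correct addresses goes bad. The following is a minimal sketch of that arithmetic, not a forecasting model. The function name `correct_share` and the annual loss rate of 10.5% are assumptions chosen because they reproduce the rounded figures above; your own rate depends on your audience and entry volume.

```python
def correct_share(start_share: float, annual_loss: float, years: int) -> float:
    """Share of correct addresses after `years`, assuming a constant
    fraction of the still-correct addresses becomes invalid each year."""
    return start_share * (1.0 - annual_loss) ** years


# Assumed inputs: 95% correct at the start, roughly 10.5% loss per year
# (moves plus entry errors). Both values are illustrative.
START_SHARE = 0.95
ANNUAL_LOSS = 0.105

for year in range(4):
    share = correct_share(START_SHARE, ANNUAL_LOSS, year)
    print(f"After {year} year(s): about {share:.0%} correct")
```

Changing `ANNUAL_LOSS` shows how sensitive the result is: a few percentage points more or less per year shift the three-year figure noticeably.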

The Five Steps to Better Data Quality

Step 1: Assessment – Where Do You Stand?

Before you improve, you need to measure. Pull a sample of 500 to 1,000 records and check them against the six dimensions:

- How many addresses are complete (all required fields populated)?

- How many postal codes are formally correct (5 digits) and match the stated city?

- How many duplicates do you find in the sample?

- How many addresses have inconsistent formatting?

Document the results. You need this baseline to measure the impact of your improvements.
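
A baseline like this can be computed with a few lines of code. The sketch below is one possible way to do it, assuming each record is a dictionary with the hypothetical field names `name`, `street`, `house_number`, `postal_code` and `city`. It only counts exact duplicates; near-duplicates need the fuzzy methods from Step 3.

```python
import re
from collections import Counter

# Hypothetical field names; adapt them to your own export.
REQUIRED_FIELDS = ("name", "street", "house_number", "postal_code", "city")
POSTAL_CODE_PATTERN = re.compile(r"\d{5}")


def value(record: dict, field: str) -> str:
    """Return a field as a trimmed string, empty if it is missing."""
    return str(record.get(field) or "").strip()


def assess(records: list) -> dict:
    """Compute simple baseline shares for a sample of address records."""
    if not records:
        raise ValueError("sample must contain at least one record")
    total = len(records)
    complete = sum(
        all(value(rec, field) for field in REQUIRED_FIELDS) for rec in records
    )
    conforming = sum(
        bool(POSTAL_CODE_PATTERN.fullmatch(value(rec, "postal_code")))
        for rec in records
    )
    # Crude key for exact duplicates: name, postal code and house number.
    keys = Counter(
        (value(rec, "name").lower(), value(rec, "postal_code"), value(rec, "house_number"))
        for rec in records
    )
    duplicates = sum(count - 1 for count in keys.values())
    return {
        "complete_share": complete / total,
        "postal_code_format_share": conforming / total,
        "exact_duplicate_share": duplicates / total,
    }


sample = [
    {"name": "Max Müller", "street": "Hauptstraße", "house_number": "12",
     "postal_code": "70173", "city": "Stuttgart"},
    {"name": "Max Müller", "street": "Hauptstraße", "house_number": "12",
     "postal_code": "70173", "city": "Stuttgart"},
    {"name": "Erika Beispiel", "street": "Marktplatz", "house_number": "",
     "postal_code": "7017", "city": "Stuttgart"},
    {"name": "Jan Test", "street": "Ringstraße", "house_number": "3",
     "postal_code": "80331", "city": "München"},
]
print(assess(sample))
```

Storing the returned dictionary together with the date of the assessment gives you the baseline to compare against after each cleansing cycle.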

Step 2: Normalization – Create Consistent Formats

Normalization brings existing data into a uniform format without changing its content:

| Before | After | Rule |
|---|---|---|
| St., Street, Str. | Street | Resolve abbreviations |
| MÜLLER, Max | Müller, Max | Fix capitalization |
| 0711/1234567 | 07111234567 | Remove special characters |
| " Max Müller " | "Max Müller" | Trim whitespace |
| Dr. med. Max Müller | Max Müller (Title: Dr. med.) | Separate titles |

Normalization is the prerequisite for all subsequent steps. Without consistent formats, any duplicate check produces errors because formatting differences are falsely interpreted as content differences.
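
The rules from the table can be expressed as small, independent functions. This is a minimal sketch under stated assumptions: the function names and the short `TITLES` list are hypothetical, and the abbreviation rule only covers the standalone forms "St." and "Str." shown above. A production rule set would need to be considerably broader.

```python
import re

# Hypothetical, deliberately short list; longer titles come first so that
# "Dr. med." is matched before "Dr.".
TITLES = ("Prof. Dr.", "Dr. med.", "Prof.", "Dr.")


def trim(value: str) -> str:
    """Collapse repeated whitespace and strip both ends."""
    return re.sub(r"\s+", " ", value).strip()


def normalize_street(value: str) -> str:
    """Expand the standalone abbreviations 'St.' and 'Str.' to 'Street'."""
    return re.sub(r"\b(?:St|Str)\.", "Street", trim(value))


def fix_capitalization(value: str) -> str:
    """Turn fully upper-case words such as 'MÜLLER,' into 'Müller,'."""
    words = trim(value).split(" ")
    return " ".join(w.capitalize() if w.isupper() and len(w) > 1 else w for w in words)


def normalize_phone(value: str) -> str:
    """Keep digits only."""
    return re.sub(r"\D", "", value)


def split_title(value: str) -> tuple:
    """Return (name, title); title is empty if none of TITLES matches."""
    name = trim(value)
    for title in TITLES:
        if name.startswith(title + " "):
            return name[len(title):].strip(), title
    return name, ""


print(normalize_street("Main St. 12"))
print(fix_capitalization("MÜLLER, Max"))
print(normalize_phone("0711/1234567"))
print(split_title("Dr. med. Max Müller"))
```

Keeping each rule in its own function makes it easy to log which rule changed which field, which matters when you need to explain a correction later.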

Step 3: Identify and Merge Duplicates

Duplicates are the most common and most expensive quality defect. In a typical business database, 8 to 15 percent of all entries are duplicated.

Typical duplicate scenario:

──────────────────────────────

Entry A: Max Müller | Hauptstraße 12 | 70173 Stuttgart

Entry B: Mueller, Max | Hauptstr. 12 | 70173 Stuttgart

Entry C: Dr. Max Müller | Hauptstrasse 12 | 70173 Stuttgart

→ Three entries, one person

→ Three letters per mailing

→ Three times the postage

Reliable duplicate detection combines multiple methods: phonetic algorithms for name variants, fuzzy matching for typos and weighted field comparison for the overall assessment. Learn more about individual methods in our article Detecting Duplicates: 7 Methods for Clean Address Data.
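
To illustrate how such a combination might look, the sketch below applies a weighted field comparison to the three entries from the scenario above. It is a simplified stand-in, not the method of any particular tool: instead of a true phonetic algorithm it transliterates umlauts, and it uses `SequenceMatcher` from Python's standard library as the fuzzy measure. The weights, the threshold of 0.85 and all function names are assumptions for illustration.

```python
from difflib import SequenceMatcher
from itertools import combinations

UMLAUTS = str.maketrans({"ä": "ae", "ö": "oe", "ü": "ue", "ß": "ss"})
# Illustrative weights; tune them against manually reviewed pairs.
WEIGHTS = {"name": 0.4, "street": 0.4, "postal_code": 0.2}


def canonical_name(name: str) -> str:
    """Lower-case, transliterate umlauts, reorder 'Last, First', drop titles."""
    name = name.lower().translate(UMLAUTS)
    if "," in name:
        last, first = (part.strip() for part in name.split(",", 1))
        name = f"{first} {last}"
    # Tokens ending in a period are treated as titles or abbreviations.
    return " ".join(token for token in name.split() if not token.endswith("."))


def canonical_street(street: str) -> str:
    """Lower-case, transliterate umlauts and expand the 'str.' abbreviation."""
    street = street.lower().translate(UMLAUTS).replace("str.", "strasse")
    return " ".join(street.split())


def similarity(a: str, b: str) -> float:
    """Fuzzy similarity between 0.0 and 1.0."""
    return SequenceMatcher(None, a, b).ratio()


def match_score(rec_a: dict, rec_b: dict) -> float:
    """Weighted comparison of name, street and postal code."""
    name = similarity(canonical_name(rec_a["name"]), canonical_name(rec_b["name"]))
    street = similarity(canonical_street(rec_a["street"]), canonical_street(rec_b["street"]))
    postal = 1.0 if rec_a["postal_code"] == rec_b["postal_code"] else 0.0
    return WEIGHTS["name"] * name + WEIGHTS["street"] * street + WEIGHTS["postal_code"] * postal


def find_duplicate_pairs(records: list, threshold: float = 0.85) -> list:
    """Return index pairs whose score reaches the threshold (compares every pair)."""
    return [
        (i, j)
        for (i, a), (j, b) in combinations(enumerate(records), 2)
        if match_score(a, b) >= threshold
    ]


entries = [
    {"name": "Max Müller", "street": "Hauptstraße 12", "postal_code": "70173"},
    {"name": "Mueller, Max", "street": "Hauptstr. 12", "postal_code": "70173"},
    {"name": "Dr. Max Müller", "street": "Hauptstrasse 12", "postal_code": "70173"},
    {"name": "Erika Schmidt", "street": "Gartenweg 3", "postal_code": "70173"},
]
print(find_duplicate_pairs(entries))
```

Comparing every pair is fine for a sample but grows quadratically with the number of records. For large databases, candidates are usually pre-grouped first, for example by postal code, so that only records within the same group are compared.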

Step 4: Validation – Is the Data Correct?

After normalization and deduplication, it is time for content verification:

Postal code validation: Does the postal code match the stated city? In Germany, there are approximately 8,200 postal codes with clear city assignments. An automated check immediately catches errors like "70173 Munich" (correct: Stuttgart).

Street validation: Does the stated street exist within the postal code area? This method requires up-to-date street directories but can identify many typos and outdated street names.

Format checks: Does the postal code have exactly 5 digits? Does the house number start with a digit? Does the email field contain an @ symbol?

Validation: Sample results

────────────────────────────────

50,000 records checked:

✓ 43,500 valid addresses (87%)

✗ 3,200 postal code-city conflicts (6.4%)

✗ 1,800 missing required fields (3.6%)

✗ 1,500 invalid formats (3%)
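
The checks described in this step can be sketched as a single function that returns a list of problems per record. The field names and the two-entry `POSTAL_CODE_CITIES` lookup are hypothetical placeholders; a real check needs a complete and current postal code directory, and street validation additionally requires a street directory that is not modeled here.

```python
import re

# Hypothetical excerpt; replace with a complete, up-to-date directory.
POSTAL_CODE_CITIES = {
    "70173": {"Stuttgart"},
    "80331": {"München"},
}
REQUIRED_FIELDS = ("street", "house_number", "postal_code", "city")


def validate(record: dict) -> list:
    """Return a list of problems found in one address record."""
    problems = []
    fields = {key: str(record.get(key) or "").strip()
              for key in REQUIRED_FIELDS + ("email",)}

    for field in REQUIRED_FIELDS:
        if not fields[field]:
            problems.append(f"missing field: {field}")

    postal_code, city = fields["postal_code"], fields["city"]
    if postal_code and not re.fullmatch(r"\d{5}", postal_code):
        problems.append("invalid format: postal_code")
    elif postal_code in POSTAL_CODE_CITIES and city:
        # Only codes present in the lookup can be checked against the city.
        if city not in POSTAL_CODE_CITIES[postal_code]:
            problems.append("postal code-city conflict")

    if fields["house_number"] and not fields["house_number"][0].isdigit():
        problems.append("invalid format: house_number")
    if fields["email"] and "@" not in fields["email"]:
        problems.append("invalid format: email")
    return problems


print(validate({"street": "Hauptstraße", "house_number": "12",
                "postal_code": "70173", "city": "Munich"}))
```

Counting the returned problem types across all records produces a summary of the kind shown in the sample results. The same function can also run at the point of entry, before a record is saved.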

Step 5: Establish Processes – Secure Quality Permanently

One-time cleansing is not enough. Without processes, data quality drops back to the old level within months. Three measures make the difference:

Define entry rules: Set required fields, specify input formats, enforce validation at the point of entry. If the CRM system only accepts postal codes that pass the format and city check, most postal code errors never reach the database.

Regular cleansing cycles: Run a full duplicate check and normalization at least once per quarter. For databases with high input volume, do it monthly.

Return mail management: Every undeliverable letter is a signal. Systematically record returns, mark affected addresses and prioritize them in the next cleansing cycle.

What Poor Data Quality Really Costs

The costs can be calculated using a concrete scenario:

Company: Mid-sized mail-order retailer, 40,000 addresses, monthly mailings via Dialogpost.

| Cost Factor | Calculation | Annual Cost |
|---|---|---|
| Duplicates (12%) | 4,800 × 12 mailings × 0.28 EUR | 16,128 EUR |
| Return mail (6%) | 2,400 × 12 mailings × 0.28 EUR | 8,064 EUR |
| Manual corrections | 200 hrs × 35 EUR/hr | 7,000 EUR |
| Lost leads | est. 2% lower response | hard to quantify |
| Total | | >31,000 EUR |

By comparison, the cost of professional cleansing, when done regularly, is only a fraction of that amount.
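
To run the same calculation with your own figures, the arithmetic from the table can be reproduced in a few lines. The function name `annual_mailing_cost` is hypothetical; the input values are the ones from the scenario, with postage expressed in cents to avoid rounding noise.

```python
def annual_mailing_cost(addresses: int, share: float,
                        mailings_per_year: int, cents_per_item: int) -> float:
    """Yearly postage in EUR spent on the affected share of addresses."""
    affected = round(addresses * share)
    return affected * mailings_per_year * cents_per_item / 100


# Values from the scenario above.
ADDRESSES = 40_000
MAILINGS_PER_YEAR = 12
CENTS_PER_ITEM = 28

duplicates = annual_mailing_cost(ADDRESSES, 0.12, MAILINGS_PER_YEAR, CENTS_PER_ITEM)
return_mail = annual_mailing_cost(ADDRESSES, 0.06, MAILINGS_PER_YEAR, CENTS_PER_ITEM)
corrections = 200 * 35  # hours per year x hourly rate in EUR

total = duplicates + return_mail + corrections
print(f"Duplicates:         {duplicates:>10,.0f} EUR")
print(f"Return mail:        {return_mail:>10,.0f} EUR")
print(f"Manual corrections: {corrections:>10,.0f} EUR")
print(f"Total:              {total:>10,.0f} EUR")
```

Lost leads are left out on purpose because the scenario treats them as hard to quantify, so the total is a lower bound.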

Data Quality as a Competitive Advantage

Good data quality is not just about cost avoidance. It enables capabilities that simply do not work with bad data:

Personalization: Personalized letters require the name, gender and salutation to be correct. "Dear Mrs. Max Müller" is more embarrassing than no personalization at all.

Segmentation: Regional campaigns, target group analyses and customer scoring all depend on correct data. With 15 percent duplicates, the results of any segmentation are distorted.

Compliance: The GDPR requires the accuracy of personal data (Art. 5(1)(d)). Knowingly working with outdated data risks fines. For details on compliant address processing, see our article on GDPR-compliant address cleansing.

Efficiency: Clean data accelerates every process – from mailing dispatch to invoicing to customer support. Fewer inquiries, fewer manual corrections, less friction.

Tools and Automation

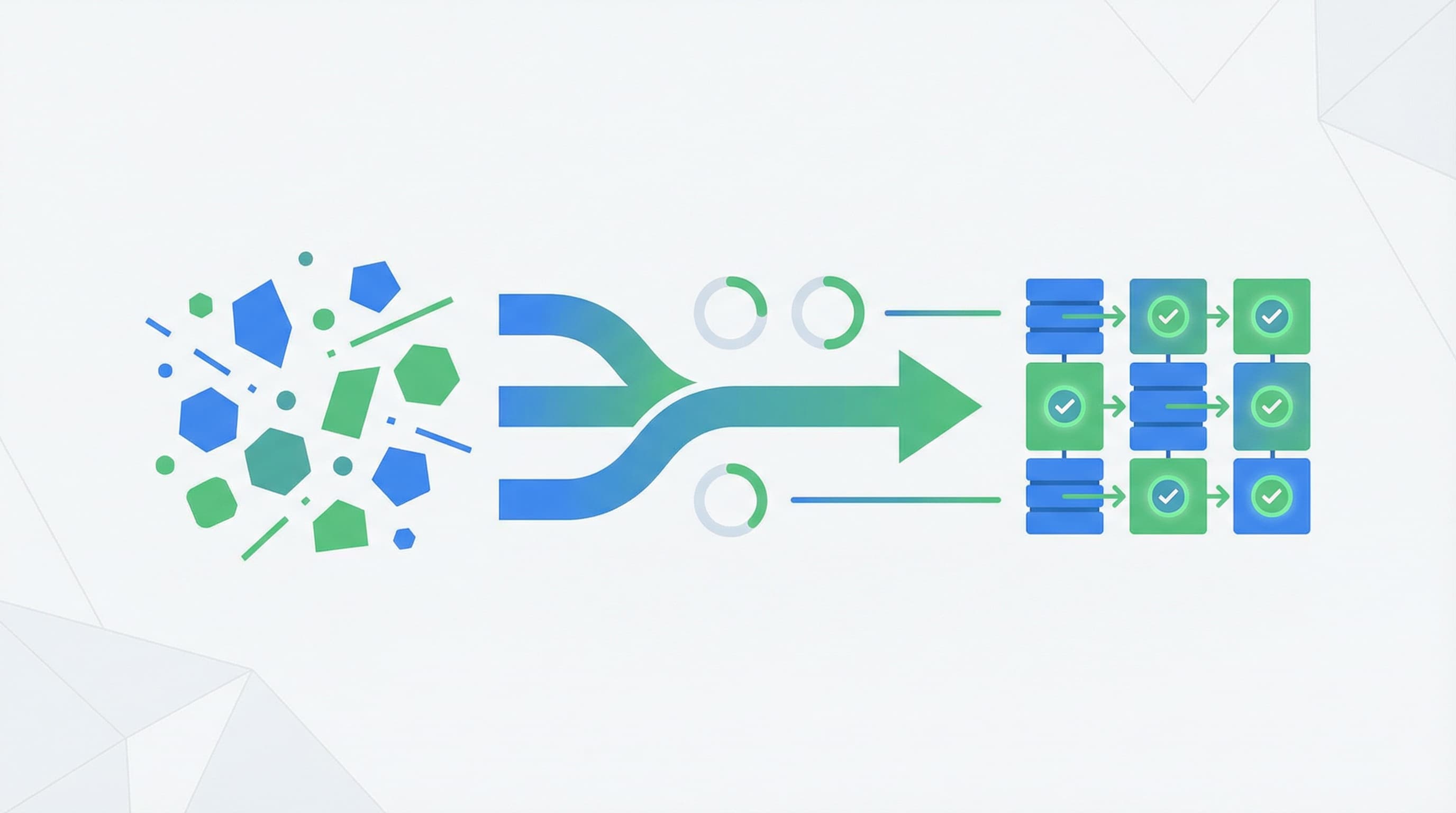

Manually cleansing an address database with tens of thousands of entries is possible but uneconomical. Beyond a certain size, there is no way around automated tools.

Professional data cleansing solutions like ListenFix combine normalization, duplicate detection with five different algorithms and postal code validation in a single pass. The key advantage: all processing happens locally – no address data is transmitted to external servers. For businesses with strict data privacy requirements, this is a decisive benefit.

The workflow is straightforward: upload your CSV or Excel file, map the columns, start the cleansing. Within seconds you receive a cleaned file including a log of all changes.

Data Quality Needs Consistency, Not Perfection

100 percent data quality is an unattainable goal. People move, companies change their names and errors creep in with every entry. The goal is not perfection but a quality level that is sufficient for your business processes – and measures that maintain this level over time.

The most effective strategy: regular small improvements rather than one-off major projects. A quarterly duplicate check followed by normalization keeps your data quality stable. If you additionally define entry rules and systematically evaluate return mail, quality improves with every cycle.

Start with an assessment. Measure where you stand. Then improve step by step – with clear processes and the right tools. For more fundamentals on address cleansing, see our article How to Clean Your Address List: A Complete Guide.
